K8S Project Practice (01): Installing a K8S Cluster with kubeadm

Planning

Server configuration

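| OS (IP) | Spec | Role |
|---|---|---|
| CentOS 7.6 (172.25.140.216) | 2C4G | k8s-master |
| CentOS 7.6 (172.25.140.215) | 2C4G | k8s-work1 |
| CentOS 7.6 (172.25.140.214) | 2C4G | k8s-work2 |

**Note**: This is a lab environment for demonstrating a k8s cluster installation, so the specs are low (2C4G means 2 CPU cores and 4 GB of RAM). In production, our servers start at 8 cores and 16 GB of RAM.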

Version selection

- CentOS: 7.6
- k8s components: 1.23.6

I. Basic server configuration

1. Set the hostname

Run on every node, using that node's own name

[root@iZ8vbfafp7g52u8976flc0Z ~]# hostnamectl set-hostname k8s-master

[root@iZ8vbfafp7g52u8976flc1Z ~]# hostnamectl set-hostname k8s-work1

[root@iZ8vbfafp7g52u8976flc2Z ~]# hostnamectl set-hostname k8s-work2

2. Disable the firewall

Run on all nodes

# Stop firewalld (to keep it off after a reboot, also run: systemctl disable firewalld)
[root@k8s-master ~]# systemctl stop firewalld

# Disable SELinux
[root@k8s-master ~]# sed -i 's/enforcing/disabled/' /etc/selinux/config
[root@k8s-master ~]# setenforce 0
setenforce: SELinux is disabled
[root@k8s-master ~]#
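The `sed` expression in this step rewrites every occurrence of `enforcing` in the file, including the ones inside its explanatory comments. A minimal sketch of a narrower edit follows, assuming the stock CentOS 7 layout in which the setting is a line that starts with `SELINUX=`. The function name `disable_selinux_in` is hypothetical, and it takes the file path as an argument so it can be tried on a copy first.

```shell
# Hypothetical helper: set SELINUX=disabled in the given config file.
# Only the SELINUX= setting line is changed; comment lines are left alone.
disable_selinux_in() {
    cfg="$1"
    sed -i -E 's/^SELINUX=(enforcing|permissive)$/SELINUX=disabled/' "$cfg"
}
```

On a real node this would be called as `disable_selinux_in /etc/selinux/config` by root.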

3. Set up local name resolution

Run on all nodes: /etc/hosts on every node should contain the three entries below

[root@k8s-master ~]# cat /etc/hosts

172.25.140.216 k8s-master

172.25.140.215 k8s-work1

172.25.140.214 k8s-work2

[root@k8s-master ~]#
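The transcript above shows the finished file but not how the entries got there. One way to add them without creating duplicates on a re-run is sketched below. The function name `add_host_entry` is hypothetical; it takes the hosts file as its first argument so it can be tested against a scratch file before touching `/etc/hosts`.

```shell
# Hypothetical helper: append "IP NAME" to a hosts-style file
# unless NAME already appears as a hostname on a non-comment line.
add_host_entry() {
    hosts_file="$1"; ip="$2"; name="$3"
    if awk -v n="$name" '
            $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$hosts_file"; then
        return 0   # already mapped, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}
```

For this cluster, the calls would be `add_host_entry /etc/hosts 172.25.140.216 k8s-master`, and likewise for k8s-work1 and k8s-work2.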

4. Passwordless SSH (optional)

Run on all nodes

[root@k8s-master ~]# ssh-keygen

Generating public/private rsa key pair.

Enter file in which to save the key (/root/.ssh/id_rsa):

Enter passphrase (empty for no passphrase):

Enter same passphrase again:

Your identification has been saved in /root/.ssh/id_rsa.

Your public key has been saved in /root/.ssh/id_rsa.pub.

The key fingerprint is:

SHA256:s+JcU9ctsItBgDC+UwxgmFhnsQGpy9SELkEOx4Lmk/0 root@k8s-master

The key's randomart image is:

+---[RSA 2048]----+

|=*B+Oo .. |

|X=o= *. . |

|+== o o . . |

|o* o o . + . |

|+.. + S o o o .|

|.. E + + . . |

| . + . . |

| o o . |

| o |

+----[SHA256]-----+

[root@k8s-master ~]#

Run on all nodes (copy each node's public key to the others)

[root@k8s-master ~]# ssh-copy-id root@k8s-work1

/usr/bin/ssh-copy-id: INFO: Source of key(s) to be installed: "/root/.ssh/id_rsa.pub"

The authenticity of host 'k8s-work1 (172.25.140.215)' can't be established.

ECDSA key fingerprint is SHA256:BMN7TIKDbFKdG3v1TVHmy3i6BYm7TGS8Hsnu1F9+UkI.

ECDSA key fingerprint is MD5:71:60:e2:6c:38:e2:20:d8:9c:94:77:54:cb:10:33:32.

Are you sure you want to continue connecting (yes/no)? yes

/usr/bin/ssh-copy-id: INFO: attempting to log in with the new key(s), to filter out any that are already installed

/usr/bin/ssh-copy-id: INFO: 1 key(s) remain to be installed -- if you are prompted now it is to install the new keys

root@k8s-work1's password:

Number of key(s) added: 1

Now try logging into the machine, with: "ssh 'root@k8s-work1'"

and check to make sure that only the key(s) you wanted were added.

[root@k8s-master ~]# ssh-copy-id root@k8s-work2

5. Load the br_netfilter module

Make sure the br_netfilter module is loaded.

Run on all nodes

# Load the module
[root@k8s-master ~]# modprobe br_netfilter
# Check that it is loaded
[root@k8s-master ~]# lsmod | grep br_netfilter
br_netfilter 22256 0
bridge 151336 1 br_netfilter

# Load it automatically at boot
[root@k8s-master ~]# cat <<EOF | tee /etc/modules-load.d/k8s.conf
> br_netfilter
> EOF
br_netfilter
[root@k8s-master ~]#

6. Let iptables see bridged traffic

Run on all nodes

[root@k8s-master ~]# cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
> br_netfilter
> EOF
br_netfilter
[root@k8s-master ~]# cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
> net.bridge.bridge-nf-call-ip6tables = 1
> net.bridge.bridge-nf-call-iptables = 1
> EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
[root@k8s-master ~]# sudo sysctl --system
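Before relying on these settings, it can help to confirm that the file really contains them. The sketch below checks a sysctl.d-style file for a key set to 1. The function name `sysctl_file_enables` is hypothetical, and the check only reads the file, so it says nothing about the running kernel.

```shell
# Hypothetical helper: succeed if KEY is set to 1 in a sysctl.d-style file.
# Usage: sysctl_file_enables FILE KEY
sysctl_file_enables() {
    awk -F= -v key="$2" '
        { gsub(/[[:space:]]/, "", $1); gsub(/[[:space:]]/, "", $2) }
        $1 == key && $2 == "1" { found = 1 }
        END { exit !found }
    ' "$1"
}
```

For the live value, `sysctl -n net.bridge.bridge-nf-call-iptables` prints what the kernel is currently using; it should print 1 once `sysctl --system` has run with br_netfilter loaded.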

7. Disable swap

Run on all nodes

# Turn swap off for the current boot
[root@k8s-master ~]# swapoff -a

# Turn swap off permanently
[root@k8s-master ~]# sed -ri 's/.*swap.*/#&/' /etc/fstab
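The `sed` expression in this step prefixes every line that mentions swap, so running it a second time adds a second `#`. A minimal sketch of a repeatable variant follows, assuming swap entries use the usual fstab layout with `swap` as a whitespace-separated field. The function name `comment_out_swap` is hypothetical.

```shell
# Hypothetical helper: comment out active swap entries in an fstab-style file.
# Lines that already start with '#' are skipped, so a second run changes nothing.
comment_out_swap() {
    fstab="$1"
    sed -i -E '/^[[:space:]]*#/!s/^(.*[[:space:]]swap[[:space:]].*)$/#\1/' "$fstab"
}
```

On a real node this would be called as `comment_out_swap /etc/fstab` by root, ideally after taking a backup copy of the file.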

8. Synchronize the time

Run on all nodes

# Sync the system time from a network time server
[root@k8s-master ~]# ntpdate time.nist.gov
23 Nov 22:36:07 ntpdate[12307]: adjust time server 132.163.96.6 offset -0.009024 sec

[root@k8s-master ~]#
# Write the system time to the hardware clock
[root@k8s-master ~]# hwclock --systohc

9. Install Docker

Run on all nodes

1. Log in to CentOS as root or as a user with sudo privileges.

2. Make sure the yum packages are up to date.

sudo yum update

3. Run the Docker installation script.

curl -fsSL https://get.docker.com/ | sh

The script adds the docker.repo repository and installs Docker.

4. Start the Docker service.

$ sudo service docker start

5. Verify that Docker is installed correctly by running a test image in a container.

[root@k8s-master ~]# sudo docker run hello-world

Hello from Docker!

This message shows that your installation appears to be working correctly.

Docker is now installed on CentOS.

10. Install kubeadm, kubelet, and kubectl

Run on all nodes

1. Add the k8s package repository

Mirror page: https://developer.aliyun.com/mirror/kubernetes?spm=a2c6h.13651102.0.0.1cd01b116JYQIn

[root@k8s-master ~]# cat <<EOF > /etc/yum.repos.d/kubernetes.repo
> [kubernetes]
> name=Kubernetes
> baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/
> enabled=1
> gpgcheck=0
> repo_gpgcheck=0
> gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg
> EOF
[root@k8s-master ~]#

2. Build the yum cache

[root@k8s-master ~]# yum makecache
Loaded plugins: fastestmirror
Loading mirror speeds from cached hostfile
base | 3.6 kB 00:00:00
docker-ce-stable | 3.5 kB 00:00:00
epel | 4.7 kB 00:00:00
extras | 2.9 kB 00:00:00
updates | 2.9 kB 00:00:00
Metadata Cache Created
[root@k8s-master ~]#

3. Install the k8s tools

# List the versions available for installation
[root@k8s-master ~]# yum list kubelet --showduplicates

...
...
kubelet.x86_64 1.23.0-0 kubernetes
kubelet.x86_64 1.23.1-0 kubernetes
kubelet.x86_64 1.23.2-0 kubernetes
kubelet.x86_64 1.23.3-0 kubernetes
kubelet.x86_64 1.23.4-0 kubernetes
kubelet.x86_64 1.23.5-0 kubernetes
kubelet.x86_64 1.23.6-0 kubernetes

# Install (pin the version you want)
[root@k8s-master ~]# yum install -y kubelet-1.23.6 kubeadm-1.23.6 kubectl-1.23.6

# Enable kubelet at boot and start it (kubelet is managed by systemd)
[root@k8s-master ~]# systemctl enable kubelet && systemctl start kubelet

II. Master node

1. Initialize k8s

Run on the master node

[root@k8s-master ~]# kubeadm init \
--apiserver-advertise-address=172.25.140.216 \
--image-repository registry.aliyuncs.com/google_containers \
--kubernetes-version v1.23.6 \
--service-cidr=10.96.0.0/12 \
--pod-network-cidr=10.244.0.0/16 \
--ignore-preflight-errors=all

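Parameter notes:

--apiserver-advertise-address # Address the master's API server advertises
--image-repository # Registry to pull the k8s images from
--kubernetes-version # K8s version (keep it the same as the kubeadm and kubelet versions)
--service-cidr # Virtual IP range for Services, the stable entry point in front of Pods
--pod-network-cidr # Pod network range (must match the CNI network)
--ignore-preflight-errors=all # Turns every preflight check failure into a warning; acceptable in a lab, but it can hide real problems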

Output after initialization:

...
...
[addons] Applied essential addon: CoreDNS
[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube
sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 172.25.140.216:6443 --token 8d9mk7.08nyz6xc2d5boiy8 \
--discovery-token-ca-cert-hash sha256:45542b0b380a8f959e5bc93f6dd7d1c5c78b202ff1a3eea5c97804549af9a12e

2. Create the kubeconfig files as the output suggests

Run on the master node

[root@k8s-master ~]# mkdir -p $HOME/.kube
[root@k8s-master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
[root@k8s-master ~]# chown $(id -u):$(id -g) $HOME/.kube/config
[root@k8s-master ~]#

3. List the running k8s system pods

Run on the master node

[root@k8s-master ~]# kubectl get pods -n kube-system -o wide
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
coredns-6d8c4cb4d-ck2x5 0/1 Pending 0 8m4s <none> <none> <none> <none>
coredns-6d8c4cb4d-mbctj 0/1 Pending 0 8m4s <none> <none> <none> <none>
etcd-k8s-master 1/1 Running 0 8m18s 172.25.140.216 k8s-master <none> <none>
kube-apiserver-k8s-master 1/1 Running 0 8m18s 172.25.140.216 k8s-master <none> <none>
kube-controller-manager-k8s-master 1/1 Running 0 8m18s 172.25.140.216 k8s-master <none> <none>
kube-proxy-r84lg 1/1 Running 0 8m4s 172.25.140.216 k8s-master <none> <none>
kube-scheduler-k8s-master 1/1 Running 0 8m18s 172.25.140.216 k8s-master <none> <none>
[root@k8s-master ~]#

4. List the k8s nodes

Run on the master node

[root@k8s-master ~]# kubectl get node -o wide
NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME
k8s-master NotReady control-plane,master 11m v1.23.6 172.25.140.216 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://20.10.21
[root@k8s-master ~]#

Only the k8s-master node is present so far, and its status is NotReady because no network plugin has been deployed yet (kubectl apply -f [podnetwork].yaml). The next step is therefore to deploy the container network (CNI).

5、容器网络(CNI)部署

Master 节点执行

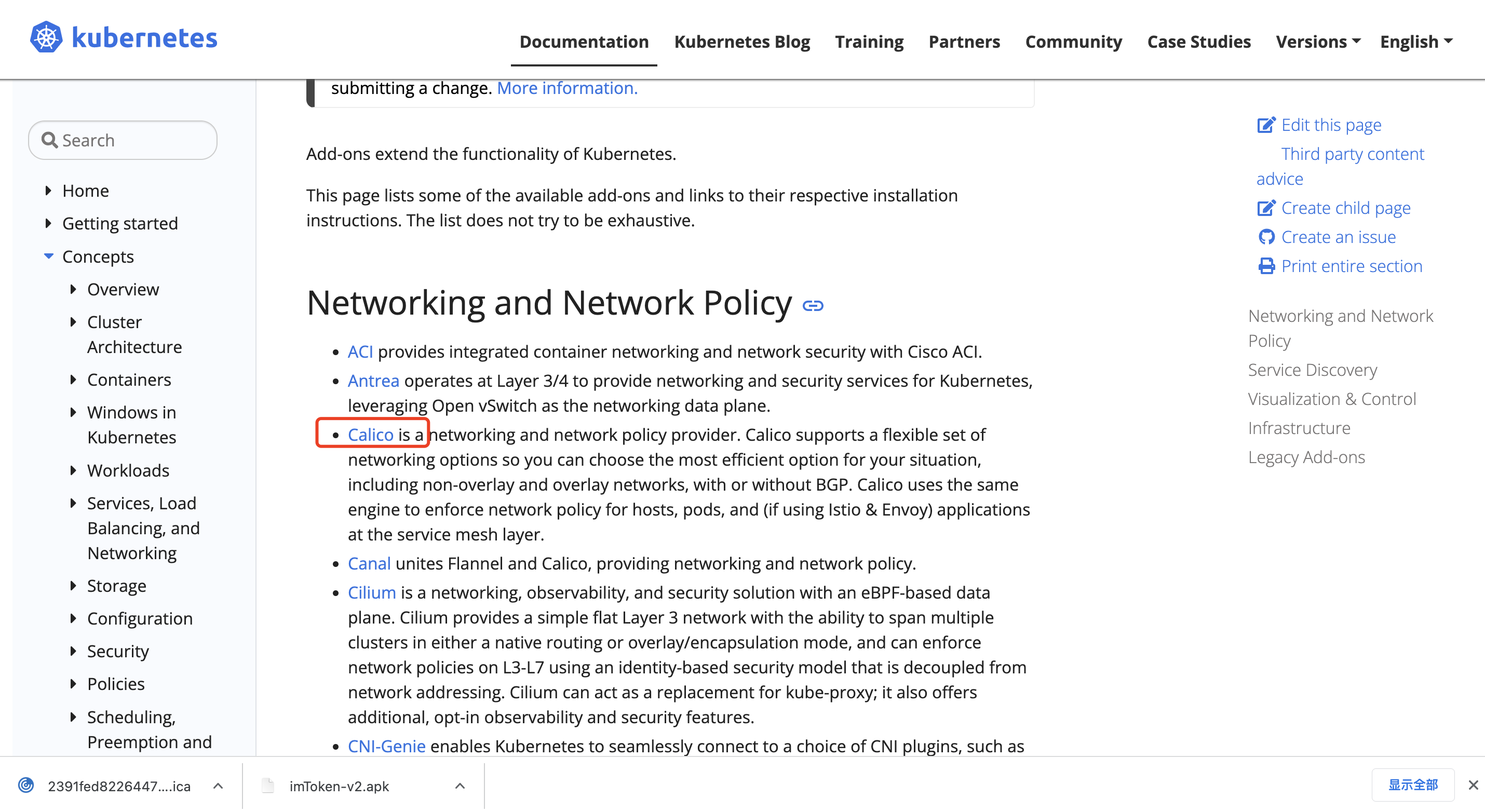

插件地址:https://kubernetes.io/docs/concepts/cluster-administration/addons/

该地址在 k8s-master 初始化成功时打印出来。

1、选择一个主流的容器网络插件部署(Calico)

2、下载yml文件

[root@k8s-master kubeadm-install-k8s]# wget https://docs.projectcalico.org/manifests/calico.yaml --no-check-certificate

3、根据初始化的输出提示执行启动指令

[root@k8s-master kubeadm-install-k8s]# kubectl apply -f calico.yaml
poddisruptionbudget.policy/calico-kube-controllers created
serviceaccount/calico-kube-controllers created
serviceaccount/calico-node created
configmap/calico-config created
customresourcedefinition.apiextensions.k8s.io/bgpconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/bgppeers.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/blockaffinities.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/caliconodestatuses.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/clusterinformations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/felixconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/globalnetworksets.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/hostendpoints.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamblocks.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamconfigs.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipamhandles.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ippools.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/ipreservations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/kubecontrollersconfigurations.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networkpolicies.crd.projectcalico.org created
customresourcedefinition.apiextensions.k8s.io/networksets.crd.projectcalico.org created
clusterrole.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrole.rbac.authorization.k8s.io/calico-node created
clusterrolebinding.rbac.authorization.k8s.io/calico-kube-controllers created
clusterrolebinding.rbac.authorization.k8s.io/calico-node created
daemonset.apps/calico-node created
deployment.apps/calico-kube-controllers created
[root@k8s-master kubeadm-install-k8s]#

4. Check which images the manifest needs

[root@k8s-master kubeadm-install-k8s]# cat calico.yaml |grep image
image: docker.io/calico/cni:v3.24.5
imagePullPolicy: IfNotPresent
image: docker.io/calico/cni:v3.24.5
imagePullPolicy: IfNotPresent
image: docker.io/calico/node:v3.24.5
imagePullPolicy: IfNotPresent
image: docker.io/calico/node:v3.24.5
imagePullPolicy: IfNotPresent
image: docker.io/calico/kube-controllers:v3.24.5
imagePullPolicy: IfNotPresent
[root@k8s-master kubeadm-install-k8s]#

5. Check that all pods are Running

[root@k8s-master kubeadm-install-k8s]# kubectl get pods -n kube-system -o wide
NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES
calico-kube-controllers-7b8458594b-pdx5z 1/1 Running 0 4m37s 10.244.235.194 k8s-master <none> <none>
calico-node-t8hhf 1/1 Running 0 4m37s 172.25.140.216 k8s-master <none> <none>
coredns-6d8c4cb4d-ck2x5 1/1 Running 0 32m 10.244.235.195 k8s-master <none> <none>
coredns-6d8c4cb4d-mbctj 1/1 Running 0 32m 10.244.235.193 k8s-master <none> <none>
etcd-k8s-master 1/1 Running 0 32m 172.25.140.216 k8s-master <none> <none>
kube-apiserver-k8s-master 1/1 Running 0 32m 172.25.140.216 k8s-master <none> <none>
kube-controller-manager-k8s-master 1/1 Running 0 32m 172.25.140.216 k8s-master <none> <none>
kube-proxy-r84lg 1/1 Running 0 32m 172.25.140.216 k8s-master <none> <none>
kube-scheduler-k8s-master 1/1 Running 0 32m 172.25.140.216 k8s-master <none> <none>
[root@k8s-master kubeadm-install-k8s]#

III. Worker nodes

1. Join the worker nodes to the k8s cluster

Run on all worker nodes

# Copy the join command printed by the k8s-master initialization and run it on each worker node
[root@k8s-work1 ~]# kubeadm join 172.25.140.216:6443 --token 8d9mk7.08nyz6xc2d5boiy8 --discovery-token-ca-cert-hash sha256:45542b0b380a8f959e5bc93f6dd7d1c5c78b202ff1a3eea5c97804549af9a12e
[root@k8s-work2 ~]# kubeadm join 172.25.140.216:6443 --token 8d9mk7.08nyz6xc2d5boiy8 --discovery-token-ca-cert-hash sha256:45542b0b380a8f959e5bc93f6dd7d1c5c78b202ff1a3eea5c97804549af9a12e

[preflight] Running pre-flight checks
[preflight] Reading configuration from the cluster...
[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'
[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"
[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"
[kubelet-start] Starting the kubelet
[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:
* Certificate signing request was sent to apiserver and a response was received.
* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.

[root@k8s-work2 ~]#
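The bootstrap token in the join command is short-lived (kubeadm's default lifetime is 24 hours), so a worker added later needs a fresh one. A minimal sketch follows, assuming it runs on the master as root. `kubeadm token create --print-join-command` is the kubeadm subcommand that prints a ready-to-paste join line; the wrapper name `fresh_join_command` is hypothetical.

```shell
# Hypothetical wrapper: print a new "kubeadm join ..." line with a fresh token.
fresh_join_command() {
    if ! command -v kubeadm >/dev/null 2>&1; then
        echo "kubeadm not found in PATH" >&2
        return 1
    fi
    kubeadm token create --print-join-command
}
```

Running `fresh_join_command` on the master prints a single-line join command that can be executed as root on the new worker.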

2. List the cluster nodes

Run on the master node

[root@k8s-master kubeadm-install-k8s]# kubectl get node -o wide
NAME STATUS ROLES AGE VERSION INTERNAL-IP EXTERNAL-IP OS-IMAGE KERNEL-VERSION CONTAINER-RUNTIME
k8s-master Ready control-plane,master 50m v1.23.6 172.25.140.216 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://20.10.21
k8s-work1 Ready <none> 9m50s v1.23.6 172.25.140.215 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://20.10.21
k8s-work2 Ready <none> 2m41s v1.23.6 172.25.140.214 <none> CentOS Linux 7 (Core) 3.10.0-957.21.3.el7.x86_64 docker://20.10.21
[root@k8s-master kubeadm-install-k8s]#

All three nodes are now Ready.

IV. Verification

Deploy an nginx service on the k8s cluster and verify that it can be accessed (from a browser, or with curl as below).

1. Create the deployment and expose it

[root@k8s-master kubeadm-install-k8s]# kubectl create deployment nginx --image=nginx
deployment.apps/nginx created
[root@k8s-master kubeadm-install-k8s]# kubectl expose deployment nginx --port=80 --type=NodePort
service/nginx exposed
[root@k8s-master kubeadm-install-k8s]# kubectl get pod,svc
NAME READY STATUS RESTARTS AGE
pod/nginx-85b98978db-qkd6h 1/1 Running 0 36s

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE
service/kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 54m
service/nginx NodePort 10.108.180.91 <none> 80:31648/TCP 15s
[root@k8s-master kubeadm-install-k8s]#

2. Access Nginx

[root@k8s-master kubeadm-install-k8s]# curl 10.108.180.91:80
<!DOCTYPE html>
<html>
<head>
<title>Welcome to nginx!</title>
<style>
html { color-scheme: light dark; }
body { width: 35em; margin: 0 auto;
font-family: Tahoma, Verdana, Arial, sans-serif; }
</style>
</head>
<body>
<h1>Welcome to nginx!</h1>
<p>If you see this page, the nginx web server is successfully installed and
working. Further configuration is required.</p>

<p>For online documentation and support please refer to
<a href="http://nginx.org/">nginx.org</a>.<br/>
Commercial support is available at
<a href="http://nginx.com/">nginx.com</a>.</p>

<p><em>Thank you for using nginx.</em></p>
</body>
</html>
[root@k8s-master kubeadm-install-k8s]#
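The curl call in this step targets the Service's ClusterIP, which is reachable only from inside the cluster. A browser outside the cluster would instead use any node's IP together with the NodePort, which is the number after the colon in the PORT(S) column (31648 in the output above). The sketch below pulls that number out of a PORT(S) value; the function name `node_port_of` is hypothetical, and the node IP is taken from the planning table.

```shell
# Hypothetical helper: extract the node port from a PORT(S) value such as "80:31648/TCP".
node_port_of() {
    ports="$1"
    ports="${ports#*:}"            # drop the service port and colon: "31648/TCP"
    printf '%s\n' "${ports%%/*}"   # drop the protocol suffix: "31648"
}

port=$(node_port_of "80:31648/TCP")
echo "http://172.25.140.215:${port}/"   # prints http://172.25.140.215:31648/
```

On a live cluster, `kubectl get svc nginx -o jsonpath='{.spec.ports[0].nodePort}'` returns the same number directly, without parsing the table.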

At this point, the k8s cluster deployed with kubeadm is complete.

FAQ

1. k8s initialization error

...
...
[kubelet-check] It seems like the kubelet isn't running or healthy.
[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp [::1]:10248: connect: connection refused.

Unfortunately, an error has occurred:
timed out waiting for the condition

This error is likely caused by:
- The kubelet is not running
- The kubelet is unhealthy due to a misconfiguration of the node in some way (required cgroups disabled)

If you are on a systemd-powered system, you can try to troubleshoot the error with the following commands:
- 'systemctl status kubelet'
- 'journalctl -xeu kubelet'

Additionally, a control plane component may have crashed or exited when started by the container runtime.
To troubleshoot, list all containers using your preferred container runtimes CLI.

Here is one example how you may list all Kubernetes containers running in docker:
- 'docker ps -a | grep kube | grep -v pause'
Once you have found the failing container, you can inspect its logs with:
- 'docker logs CONTAINERID'

error execution phase wait-control-plane: couldn't initialize a Kubernetes cluster
To see the stack trace of this error execute with --v=5 or higher

Check the logs

Apr 26 20:33:30 test3 kubelet: I0426 20:33:30.588349 21936 docker_service.go:264] "Docker Info" dockerInfo=&{ID:2NSH:KJPQ:XOKI:5XHN:ULL3:L4LG:SXA4:PR6J:DITW:HHCF:2RKL:U2NJ Containers:0 ContainersRunning:0 ContainersPaused:0 ContainersStopped:0 Images:7 Driver:overlay2 DriverStatus:[[Backing Filesystem extfs] [Supports d_type true] [Native Overlay Diff true]] SystemStatus:[] Plugins:{Volume:[local] Network:[bridge host macvlan null overlay] Authorization:[] Log:[awslogs fluentd gcplogs gelf journald json-file logentries splunk syslog]} MemoryLimit:true SwapLimit:true KernelMemory:true KernelMemoryTCP:false CPUCfsPeriod:true CPUCfsQuota:true CPUShares:true CPUSet:true PidsLimit:false IPv4Forwarding:true BridgeNfIptables:true BridgeNfIP6tables:true Debug:false NFd:24 OomKillDisable:true NGoroutines:45 SystemTime:2022-04-26T20:33:30.583063427+08:00 LoggingDriver:json-file CgroupDriver:cgroupfs CgroupVersion: NEventsListener:0 KernelVersion:3.10.0-1160.59.1.el7.x86_64 OperatingSystem:CentOS Linux 7 (Core) OSVersion: OSType:linux Architecture:x86_64 IndexServerAddress:https://index.docker.io/v1/ RegistryConfig:0xc000263340 NCPU:2 MemTotal:3873665024 GenericResources:[] DockerRootDir:/var/lib/docker HTTPProxy: HTTPSProxy: NoProxy: Name:k8s-master Labels:[] ExperimentalBuild:false ServerVersion:18.06.3-ce ClusterStore: ClusterAdvertise: Runtimes:map[runc:{Path:docker-runc Args:[] Shim:<nil>}] DefaultRuntime:runc Swarm:{NodeID: NodeAddr: LocalNodeState:inactive ControlAvailable:false Error: RemoteManagers:[] Nodes:0 Managers:0 Cluster:<nil> Warnings:[]} LiveRestoreEnabled:false Isolation: InitBinary:docker-init ContainerdCommit:{ID:468a545b9edcd5932818eb9de8e72413e616e86e Expected:468a545b9edcd5932818eb9de8e72413e616e86e} RuncCommit:{ID:a592beb5bc4c4092b1b1bac971afed27687340c5 Expected:a592beb5bc4c4092b1b1bac971afed27687340c5} InitCommit:{ID:fec3683 Expected:fec3683} SecurityOptions:[name=seccomp,profile=default] ProductLicense: DefaultAddressPools:[] Warnings:[]}

Apr 26 20:33:30 test3 kubelet: E0426 20:33:30.588383 21936 server.go:302] "Failed to run kubelet" err="failed to run Kubelet: misconfiguration: kubelet cgroup driver: \"systemd\" is different from docker cgroup driver: \"cgroupfs\""

The end of the error message, kubelet cgroup driver: "systemd" is different from docker cgroup driver: "cgroupfs", makes the cause clear: kubelet and Docker use different cgroup drivers. kubelet uses systemd, while Docker uses cgroupfs.

A quick check of Docker's driver:

[root@k8s-master opt]# docker info |grep Cgroup

Cgroup Driver: cgroupfs

Solution

# Reset the initialization
[root@k8s-master ~]# kubeadm reset
# Remove the related config files
[root@k8s-master ~]# rm -rf $HOME/.kube/config && rm -rf $HOME/.kube
# Change Docker's cgroup driver to systemd (that is, "exec-opts": ["native.cgroupdriver=systemd"])
[root@k8s-master opt]# cat /etc/docker/daemon.json
{
"registry-mirrors": ["https://q1rw9tzz.mirror.aliyuncs.com"],
"exec-opts": ["native.cgroupdriver=systemd"]
}
# Restart Docker
[root@k8s-master opt]# systemctl daemon-reload
[root@k8s-master opt]# systemctl restart docker.service
# Initialize k8s again
[root@k8s-master ~]# kubeadm init ...
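Before re-running the initialization, it may be worth confirming that the change is in place. The sketch below only inspects the text of a daemon.json file; the function name `uses_systemd_cgroup` is hypothetical, and a plain text match is an assumption that holds for a simple file like the one shown above.

```shell
# Hypothetical helper: succeed only if the given daemon.json
# selects the systemd cgroup driver through exec-opts.
uses_systemd_cgroup() {
    grep -q 'native\.cgroupdriver=systemd' "$1"
}

# Example call against the real file (prints nothing when the file is missing):
if [ -f /etc/docker/daemon.json ] && uses_systemd_cgroup /etc/docker/daemon.json; then
    echo "daemon.json selects the systemd cgroup driver"
fi
```

After Docker restarts, `docker info --format '{{.CgroupDriver}}'` reports the driver the daemon is really using, which is the value kubelet compares against.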

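2. Errors when a worker node joins the k8s cluster

Error 1:

accepts at most 1 arg(s), received 3
To see the stack trace of this error execute with --v=5 or higher

Cause: the command was malformed. I pasted the join command straight from the k8s-master initialization output in the terminal, and the formatting it picked up triggered the error. It is safer to paste it into a text file first, fix the formatting, and then paste and run it.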
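Cleaning up the pasted text can also be scripted. The sketch below turns a multi-line paste into a single line, assuming the command itself contains no backslashes other than line continuations (true for the join command shown earlier). The function name `one_line` is hypothetical.

```shell
# Hypothetical helper: read a pasted multi-line command on stdin and print it as one line.
# Continuation backslashes are removed and every run of whitespace
# (spaces, tabs, newlines) collapses to a single space.
one_line() {
    tr -d '\\' | tr -s '[:space:]' ' ' | sed 's/^ //; s/ $//'
}
```

One way to use it is to run `one_line`, paste the two-line join command from the init output, and press Ctrl-D; the single-line result can then be copied to the worker node.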

Error 2:

...
[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp 127.0.0.1:10248: connect: connection refused.

The fix is the same as for the master.


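Author: mospan

WeChat: 墨斯潘園

Source: http://mospany.github.io/2022/11/23/kubeadm-install-k8s/

The copyright of this article belongs to the author. Reposting is welcome, but unless the author agrees otherwise, this notice must be kept and a link to the original article must appear in a prominent place on the page; otherwise the author reserves the right to pursue legal liability.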